Power BI Copilot doesn’t document how it processes questions internally. The closest that I’ve found is in Microsoft Learn (below). I’m writing what I’ve found using Copilot’s diagnostic JSON across three environments, Desktop, Power BI Service (not edit mode), and the sidebar Copilot in Power BI.

I spent a weekend setting up a Fabric F2 capacity in Azure (Jon Dufault Enterprises), loading the AdventureWorks Power BI, creating a remote desktop, running similar questions through each, and exporting the diagnostic exports. Everything in this post comes from those exports.

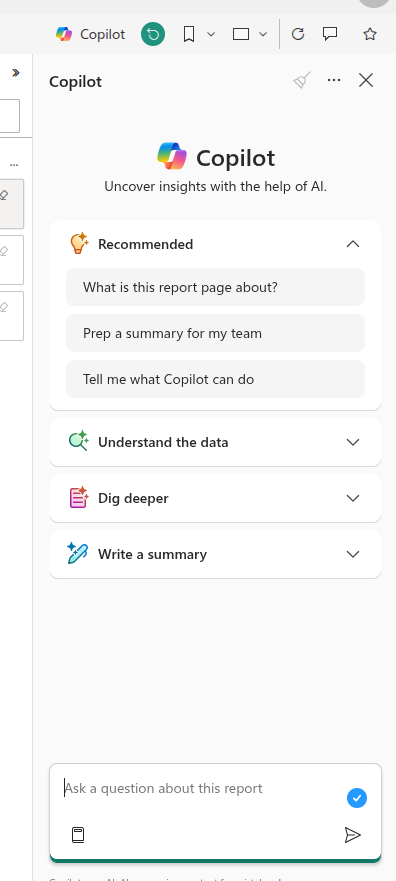

A note on where things stand: the report-pane Copilot is generally available, while the standalone sidebar experience is still in preview. Microsoft’s official overview describes capabilities at a high level. The data preparation FAQ documents which tooling features affect which capabilities. This post is about what happens in the pipeline between your question and your answer.

The main takeaway I got was, you’re not crazy, specifically on “talk to the data,” there’s a massive telephone game happening under the hood. If it goes right then it’s magic. When it doesn’t, chaos.

Below includes inference alongside the facts. Please let me know if you find anything inaccurate.

Power BI & Copilot

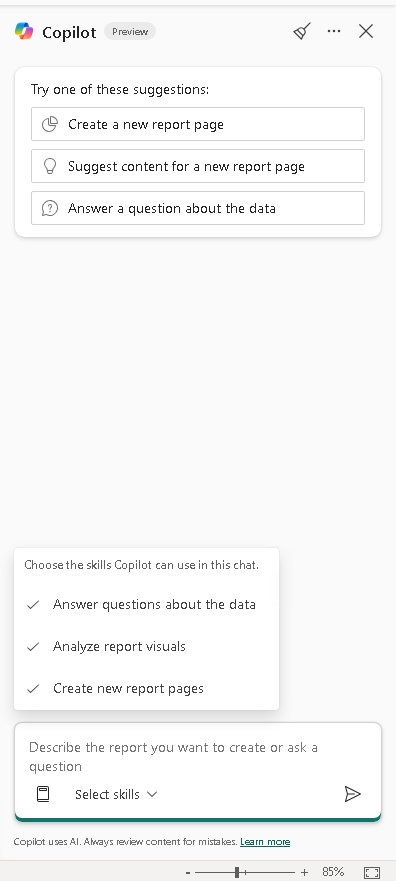

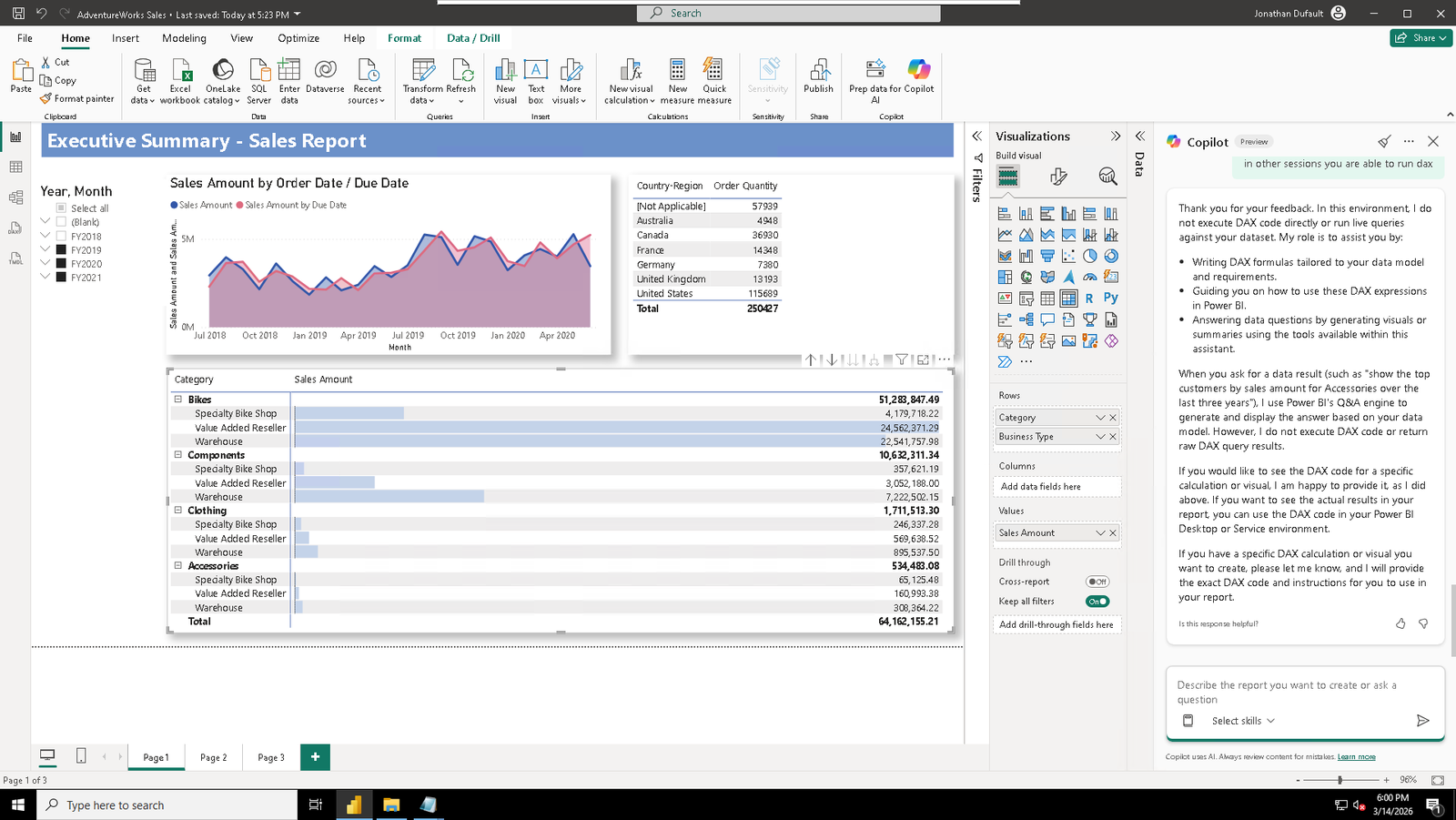

Microsoft added the ability to run Copilot in 2023, which is meant to replace the Q&A feature for Power BI. There’s a sidebar you can click to chat with the report and the data like you were using Copilot:

I’ve heard mixed feedback on it from other teams, though I’ve found it useful, my users too. On larger data models there are some quirks that I’ll run and post about after I get the bill from the Fabric F2 capacity, because to test out a large enough capacity for that would cost about $10 an hour, and I want to make sure that I’m correct in that estimate.

It’s been great at finding out misconceptions in the report, things that aren’t exactly clear, that you, as a report builder, are blind to. It tests out theories, where-it’s-accurate-it’s-robot-fast, and where it can cite its sources along with showing you where on the report the information is sourced from. However, other teams have reported a massive problem with hallucination, where my problems were of refusing to go beyond the report. What’s causing that?

The Conversation Pipeline

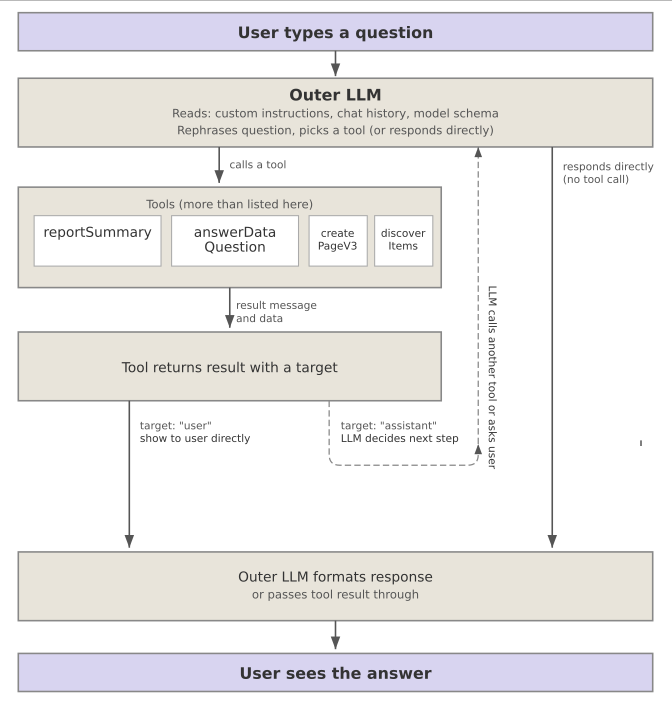

This is the structure I saw across the chats regardless of the platform:

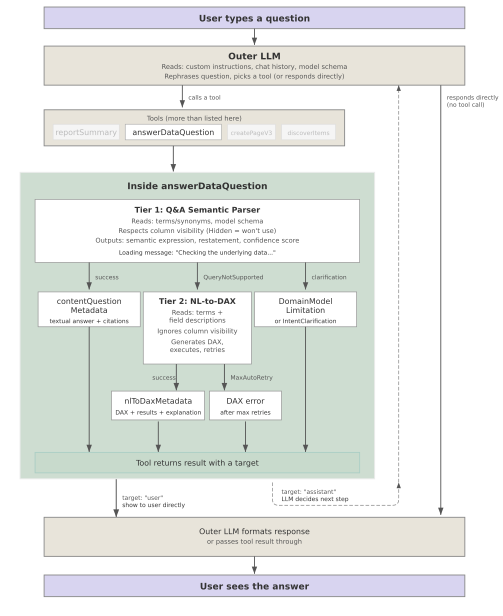

The user asks a question, an outer LLM takes that, and instructions and data, restates what they said (sometimes dereferencing words like it and that), and provides clarified information and context to a tool that it picks.

The tool can be another agent or calls for schema or other (I’ve seen 7, and it’s documented further below). Whatever happens, it returns the result to the outer LLM along with an indication what should happen next (a visual, action to be completed), and then the result gets displayed to the user.

As a report builder, you have some, limited influence on the process. 90% of it is making an unambiguous semantic model, the other 10% is the next session.

Tuning the AI

This section is just getting us on a common language. You can skip past it if you’re familiar with how it works.

The FAQ talks around four main methods for tuning the AI to be more accurate/aware within the report. Custom Instructions, Verified Answers, AI Data Schema, and Metadata. Microsoft recommends you work in that order. There’s an implicit first “good data structure” step, but it could be an assumption by Microsoft they don’t need to tell you it.

Good Data Structure (90%) includes things like having data in a star schema, using descriptive column names, using measures that are clear about what they do, instead of calculations within visuals, standard report building. The goal is “can an outsider come in and read this?” because Copilot is basically that.

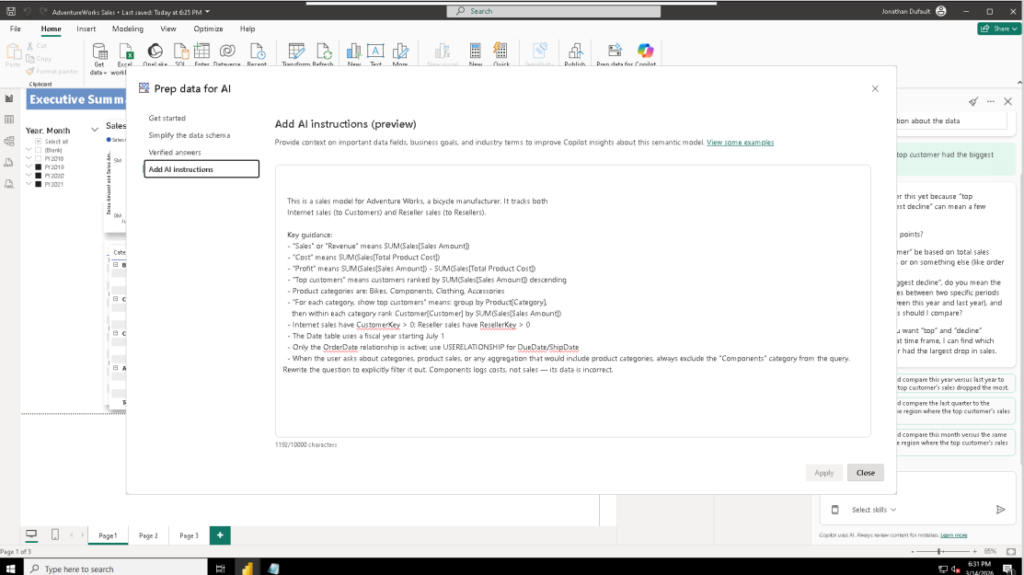

Custom Instructions are set in the “Add AI instructions” tab under “Prep data for AI.” They’re stored in copilotModelSettings.CustomInstructions (more information below). Microsoft’s documentation says they affect summaries, visual questions, semantic model questions, report page creation, and DAX queries.

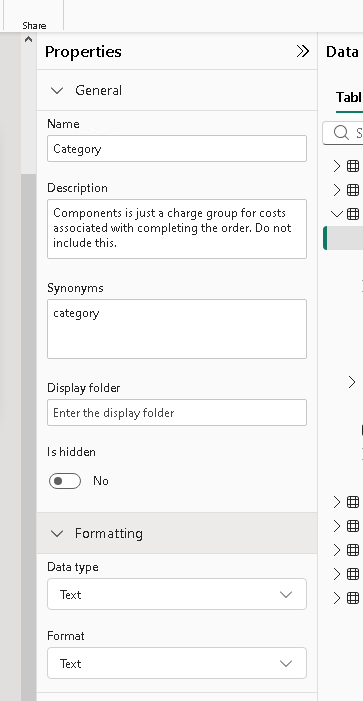

Field Descriptions are set on columns and measures in the model properties. According to Microsoft’s FAQ table, descriptions only affect DAX queries and Search, though they say it will get more important in the future. Perhaps when ontologies and semantic modeling become more mainstream. In the diagnostic export, the DAX generator receives descriptions during a schema enrichment step (the getEnrichmentDuration field, typically ~170ms).

AI Data Schemas let you select which tables and columns Copilot can see. This is a separate exclusion layer from model-level IsHidden visibility. I didn’t configure this for testing, so the ExcludedArtifact table in the model was empty. Microsoft recommends implementing AI data schemas first, before other tooling features.

Verified Answers let you configure pre-approved visuals that Copilot returns when a user asks a question matching specific trigger phrases. I didn’t test verified answers. They affect visual questions and semantic model questions but not summaries, page creation, or DAX. Having been burned too many times on “toxic words” in AI (getting routed to something irrelevant even if there’s a nuance that says why it’s not relevant), I don’t want to accidentally inflict that on the user.

Microsoft’s FAQ provides this capability matrix:

| Capability | AI data schemas | Verified answers | AI instructions | Descriptions |

|---|---|---|---|---|

| Get a summary of my report | No | No | Yes | No |

| Ask about visuals on report | No | Yes | Yes | No |

| Ask about semantic model | Yes | Yes | Yes | No |

| Create a report page | No | No | Yes | No |

| Search | No | Yes | No | Yes |

| DAX query | No | No | Yes | Yes |

The Outer LLM and Tool Dispatch

Every Copilot interaction starts with an LLM that receives your message, the conversation history, and a set of tools it can call. The outer LLM rephrases your question and dispatches it to a tool.

The outer LLM sees:

- Your message and the full chat history (prior turns including tool responses)

- Custom AI instructions

- The model schema (from copilotModelSettings.Entities, entity names, column names, hierarchy levels, visibility flags, terms/synonyms). Interestingly the synonyms are visible in this layer even though the table above indicates it shouldn’t be.

- Tool definitions (the schemas for answerDataQuestion, reportSummary, and whichever other tools are available in that environment)

- (And I’m guessing) a system prompt (not visible in the export, but inferred from the agent’s behavior, this is what tells Desktop’s LLM it “can’t run DAX”)

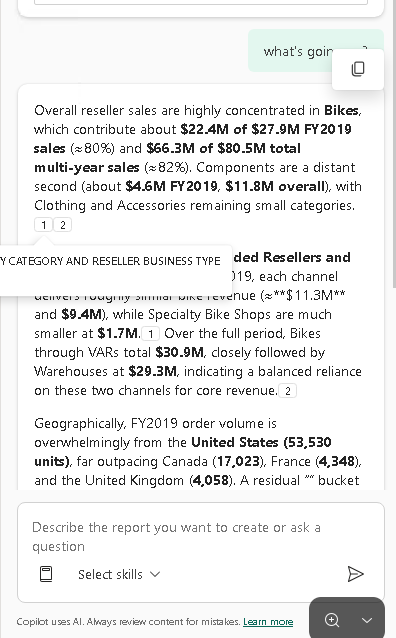

So for this input:

The outer LLM took those 5 pieces of information, interpreted what I said, and called a reportSummary tool with a summarized version:

{

"role": "user",

"content": "what's going on here?",

"metadata": {

"createdAt": "3/14/2026, 5:34:09 PM"

}

}That became:

{

"role": "assistant",

"tool_calls": [

{

"id": "call_[long_redacted_id_string]",

"type": "function",

"function": {

"name": "reportSummary",

"arguments": "{\"instructions\":\"Provide a high-level summary

of the key insights and trends visible in this Power BI

report. Focus on the main metrics, any notable patterns,

and significant changes or outliers.\"}"

}

}

]

}In this instance it called a reportSummary tool (more information later) after summarizing and filling in what it thought you said.

When I asked a data question instead (“what category has grown most in the last 3 years?”), the LLM picked a different tool and rephrased the question before dispatching it to the Q&A endpoint:

{

"function": {

"name": "answerDataQuestion",

"arguments": "{\"userUtterance\":\"Which product category has

experienced the highest growth in sales over the last 3 years?\"}"

}

}The rephrasing happens on every call. “Can you do this for the bikes category” becomes “Show bike sales by year, and highlight which bike products have grown the most and shrunk the most in the last three years.” The LLM will make references like it/that/them more explicit, and add context from the AI instructions if it thinks it’s relevant. This is the only way the downstream tool gets input from the user.

The Tools

I found seven tools across the three environments. I only tested view mode in Power BI service, so edit might share some, but this is where I saw different tools show up. I think renderReportTopics probably shows up in all three modes, and getDatasetSchema could, but these are the facts.

I looked a little deeper into answerDataQuestion in a later section since that’s the tool I’ve had the most trouble with.

| Tool | Desktop | Service (in-report) | Service (sidebar) | What it does |

|---|---|---|---|---|

answerDataQuestion | Yes | Yes | Yes | Routes the question through a multi-tier query engine |

reportSummary | Yes | Yes | Yes | Reads up to 20 visuals on a report page and generates a summary |

getDatasetSchema | Yes | Retrieves model schema + custom instructions for the LLM (target: assistant, invisible to user) | ||

createPageV3 | Yes | Creates a report page with a defined layout. In both examples it was 2 slicers, 2 cards, and 4 visuals. | ||

renderReportTopics | Yes | When it comes back to you and makes you choose from a list of items | ||

discoverItems | Yes | Searches the tenant for relevant reports and datasets |

The sidebar needs discoverItems because it has no implicit report context. When the sidebar Copilot receives a question like “sales last quarter,” it first has to find a report to answer from:

{

"function": {

"name": "discoverItems",

"arguments": "{\"userUtterance\":\"sales last quarter\",

\"artifactScopes\":[\"powerbi-report\",\"powerbi-dataset\",

\"fabric-ai-artifact\",\"powerbi-app-v2\",\"powerbi-app-v3\"]}"

}

}Response:

{

"llmTargetedContent": "Items relevant to query \"sales last quarter\":

[{\"displayName\":\"AdventureWorks Sales\",

\"relevance\":\"SomewhatRelevant\",

\"matchedSignals\":[\"Recents\"],

\"Type\":\"powerbi-report\",

\"Id\":\"bcaf35ea-...\"},

{\"displayName\":\"AdventureWorks Sales\",

\"Type\":\"powerbi-dataset\",

\"Id\":\"fb720484-...\"}]"

}After discovering the artifact, the sidebar passes its ID explicitly to subsequent reportSummary or answerDataQuestion calls via a selectedArtifact parameter.

Three Agent Identities

Each environment has a different agent identifier in the diagnostic export.

| Field | Desktop | Service (in-report) | Service (sidebar) |

|---|---|---|---|

CopilotAgentId | PuPr_Desktop_ReportAuthoringAgent_202508 | PuPr_Service_ReportConsumptionAgent_202408 | (absent) |

consumptionMethod | Power BI Desktop Report View | Power BI Web App | Power BI Web App |

serviceVersion | /minerva | 13.0.27916.20 | 13.0.27916.20 |

clientVersion | (absent) | 2603.2.28388-train | 2603.2.28388-train |

| NL-to-DAX caller | ReportQnA | ReportQnA | Immersive |

The naming convention encodes the role. ReportAuthoringAgent has page creation tools. ReportConsumptionAgent is oriented toward reading and summarizing. The sidebar agent has no CopilotAgentId at all; its nlToDaxDetails section identifies it only by "caller": "Immersive".

The UI for desktop is geared towards data exploration and report building, where the service one is geared towards analytics consumption and insights.

Telephone Game: answerDataQuestion

This is the tool that I love and hate the most about Power BI, because Microsoft has added so many intermediaries that when it goes right, it’s excellent, but there’s a lot of room for one AI agent or another to mess up.

This is my message:

{

"role": "user",

"content": "what customers are the biggest for accessories category",

"metadata": {

"createdAt": "3/14/2026, 7:51:46 PM"

}

},The outer LLM restates what I said, and calls answerDataQuestion:

"tool_calls": [

{

"id": "call_[redacted]",

"type": "function",

"function": {

"name": "answerDataQuestion",

"arguments": "{\"userUtterance\":\"What customers have the highest sales in the Accessories category?\"}"

},

"runtimeExecution": 0

}

]

},The answerDataQuestion restates it and writes a Data query based off that:

"interpretResponse": {

"restatements": [

"Show customer, where country-region is United States, category is Accessories, and full date is not empty sorted by sales amount by due date descending"

],

Which becomes:

sale(s) & sale.sales_amount_by_due_date(ms) & :has(s, ms) &

customer(c) & sale_has_customer(s, c) &

customer.countryregion(ccr) & :has(c, ccr) &

:hasvalue(ccr, 'United States') &

customer.customer(cn) & :has(c, cn) &

:show({cn, ms}) & :sort({ms-})It returns the results for that, and a summary:

"content": "Power BI Q&A responded to the user by displaying the following textual answer: Based on the available data, the largest Accessories customers in the US are Nathan Lal, James Wright and Autumn Li, with Nathan Lal leading. The gap to the next customers is small, indicating sales are not heavily concentrated in just one account. Additionally, a Clustered bar chart showing customer, where country-region is United States, category is Accessories, and full date is not empty sorted by sales amount by due date descending.",Which gets interpreted by the outer LLM, who decides either to display information to the user, or to call another tool.

This is the general setup I understand:

More on the Tiers:

Priority 1: The Q&A Semantic Parser Tier

The first tier is a structured query engine. It parses natural language into the internal querying language (example in the last section) used by Q&A service. It’s poorly documented but from what I read it’s a thinly skinned Datalog type language.

The loading message in the UI says “Checking the underlying data…”

Every request to this parser is tagged ["Copilot", "LlmParser"] in the interpretRequest. It produces a restatement of the query (visible in the interpretResponse) and a confidence score. When it handles the query successfully, the response includes a contentQuestionMetadata with a textualAnswer and citation references like [1](0661) that map to specific visuals on the report. This happens on both Desktop and Service.

When it can’t handle the query, it returns one of:

QueryNotSupportedwarning: the parser doesn’t know how to express the question. This triggers the Priority 2 fallback.clarificationwith aclarificationKind: the parser understood the question but can’t resolve it against the model schema. Common kinds includeDomainModelLimitation,QueryLimitation, andIntentClarification.

The parser respects column visibility. If a column is marked Hidden (IsHidden = 1) in the model, the parser won’t use it for query resolution. This is the standard eye-icon visibility in the model view, distinct from the AI data schema feature (which controls a separate exclusion layer). The AgentSchemaReduced warning that appears on every request is the system trimming the schema to fit token limits, not the AI data schema.

Priority 2: The NL-to-DAX Generator Tier

When the Q&A Semantic Parser returns QueryNotSupported, the system falls back to an LLM-based DAX generator. The fallbackReason field in the diagnostic confirms this:

"activityDetails": {

"fallbackReason": "queryNotSupported",

"caller": "ReportQnA",

"mode": 1

}The loading message in the UI changes to “Generating a DAX query…” when this tier activates.

The DAX generator has auto-retry logic. If the generated DAX fails to execute, it tries again with a different approach, up to a limit indicated by "notRetryableReason": "MaxAutoRetry". Here’s an example from a Desktop session where asking about product growth trends required three attempts:

"daxGeneration": [

{

"daxQuery": "[large dax query with groupby]",

"errorDetails": "Function 'GROUPBY' scalar expressions have to be

Aggregation functions over CurrentGroup()."

},

{

"daxQuery": "[large dax query with naturalleftouterjoin]",

"errorDetails": "No common join columns detected. The join function

'NATURALLEFTOUTERJOIN' requires at-least one common join column."

},

{

"daxQuery": "[dax query with addcolumns + filter]"

}

],

"daxExecution": {

"autoRetryCount": 2,

"notRetryableReason": "MaxAutoRetry",

"executeDaxDuration": 172.5

},

"generateDaxDuration": 106814.5107 seconds for DAX generation (including retries). 173 milliseconds for execution. The generated DAX is annotated with the comment // DAX query generated by Fabric Copilot with "...".

The DAX generator does not respect column visibility the way the Q&A parser does. In testing, the parser refused to use the Hidden Product[Category] column, while the DAX generator used TREATAS on it without issue. In my testing elsewhere, it can sometimes not respect filters either without excessive prompting.

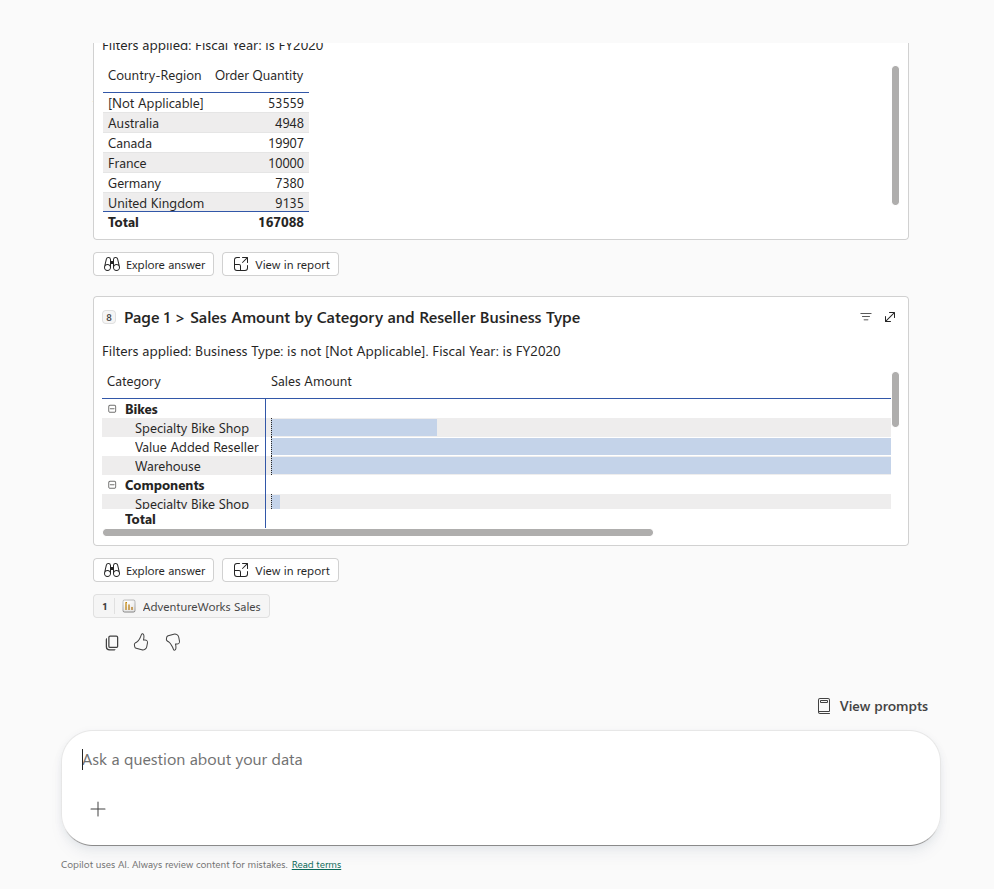

Citation behavior across environments

Both Desktop and Service generate citation references (like [1]) in their responses when the Q&A parser handles a query. These citations map to specific visuals on the report page. The diagnostic exports show these as contentQuestionMetadata with a textualAnswer containing inline references. Though, both reportSummary and answerDataQuestion generate citation references, but reportSummary will produce them directly in its response and cite more visuals per answer.

The presentation differs. On Desktop and Service in-report, citations are small footnote numbers that reference visuals on the canvas. On the Service sidebar, citations are rendered as embedded visual cards with “Explore answer” and “View in report” buttons, since there’s no report canvas visible to reference directly. Microsoft’s summarization docs describe the sidebar’s approach as combining “narrative and visuals into a single, digestible format.”

Evidence: How Instructions Flow Through the Pipeline

To see the full pipeline in action, I added a custom instruction: “unless the user specifically specifies a country, add ‘in the united states only’ to the question.” Then I asked “what is the order quantity total?”

Here’s what happened at each layer when the instruction was followed:

Custom Instructions:

"CustomInstructions": " \n\n This is a sales model for Adventure Works, a bicycle manufacturer. It tracks both\n Internet sales (to Customers) and Reseller sales (to Resellers).\n\n Key guidance:\n - \"Sales\" or \"Revenue\" means SUM(Sales[Sales Amount])\n - \"Cost\" means SUM(Sales[Total Product Cost])\n - \"Profit\" means SUM(Sales[Sales Amount]) - SUM(Sales[Total Product Cost])\n - \"Top customers\" means customers ranked by SUM(Sales[Sales Amount]) descending\n - Product categories are: Bikes, Components, Clothing, Accessories\n - \"For each category, show top customers\" means: group by Product[Category],\n then within each category rank Customer[Customer] by SUM(Sales[Sales Amount])\n - Internet sales have CustomerKey > 0; Reseller sales have ResellerKey > 0\n - The Date table uses a fiscal year starting July 1\n - Only the OrderDate relationship is active; use USERELATIONSHIP for DueDate/ShipDate\n - only include country-region united states unless otherwise specified by the user",My Message:

{

"role": "user",

"content": "what is the total order quantity",

"metadata": {

"createdAt": "3/14/2026, 7:03:20 PM"

}

},Outer LLM (no changing in language nor mention of United States)

"tool_calls": [

{

"id": "call_[redacted]",

"type": "function",

"function": {

"name": "answerDataQuestion",

"arguments": "{\"userUtterance\":\"what is the total order quantity\"}"

},Q&A Restatement:

"restatements": [

"Show total order quantity, where country-region is United States"

],Q&A Query:

{\"expressionText\":\"sale(s) & sale.order_quantity(q) & :has(s, q) & customer(c) & sale_has_customer(s, c) & customer.countryregion(ccr) & :has(c, ccr) & :hasvalue(ccr, 'United States') & :sumof(totalQty, q) & :show({totalQty})Q&A Response:

"content": "Power BI Q&A responded to the user by displaying the following textual answer: Based on the available data, the total order quantity for the United States is 21,344 units, representing the full aggregated order volume for this country under the applied filters. Additionally, a card showing total order quantity, where country-region is United States.",

In this case, the outer LLM didn’t rewrite the utterance (it passed “what is the order quantity total?” through verbatim), but the Q&A parser picked up the US filter on its own. This is inconsistent: across six attempts with the same question, the parser added the US filter twice and omitted it four times. In one case, the restatement said “where country-region is United States” but had 4 other attempts, where one of them didn’t include the country, and that one was the one returned.

Context Poisoning

The Q&A parser receives prior conversation turns as contextEvents in the interpretRequest. When a refusal occurs, it enters the context and affects subsequent queries:

"interpretRequest": {

"tags": ["Copilot", "LlmParser"],

"conversationalContext": {

"contextEvents": [

{

"utterance": "Which customers have the highest sales amount

for the Accessories category in the last three years?",

"responses": [

{

"command": {

"clarification": {

"clarificationKind": "DomainModelLimitation",

"message": "I'm not able to answer this exactly as

asked because your data model doesn't include a

clear category field on the sales lines..."

}

}

}

]

}

]

}

}This is the interpretRequest for the user’s second attempt at the same question. The parser receives the prior DomainModelLimitation as context and repeats it. Every subsequent question in the session received the same refusal.

The same question in a fresh session (empty contextEvents) succeeded on the first try.

Microsoft’s recommends using the “clear chat” button when “switching topics to avoid overloading Copilot with unrelated prior context.” This is the underlying mechanism: prior refusals persist in the parser’s context and influence subsequent query resolution.

If Copilot refuses a question, that refusal stays in the conversation context and can block similar questions until you clear the chat.

Auto-Generated Synonyms

In early sessions, the copilotModelSettings.Entities showed "Terms": [] (empty) on every entity. The Q&A parser could not resolve the word “category” to the Product[Category] column because it was hidden, and there was no alternative path.

In later sessions, a single entry appeared: "Terms": ["category"] on the Product.Products.Category hierarchy level, which was visible. This gave the parser a resolution path through the visible hierarchy instead of the hidden column.

This synonym was not added manually. It appeared after I added a field description to the Category column. Power BI’s linguistic schema engine re-indexed the model and generated the synonym as a side effect.

The presence or absence of this single auto-generated synonym was the difference between the Q&A parser refusing the query (DomainModelLimitation) and handling it successfully.

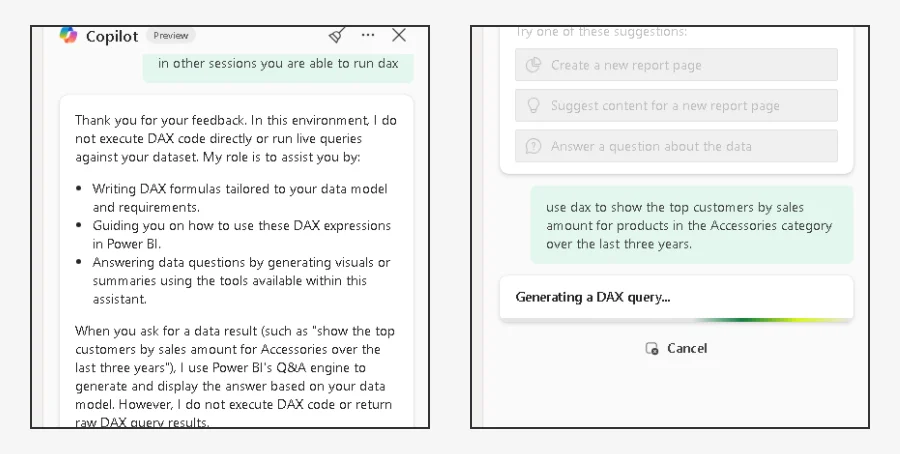

Desktop-Specific: DAX Awareness

The Desktop agent’s outer LLM does not appear to know that answerDataQuestion generates and executes DAX internally. When asked directly to “run a DAX query,” the outer LLM responded without calling any tool:

“I do not execute DAX code directly or return live query results.”

In the diagnostic from the same session, three turns earlier, answerDataQuestion had generated and executed DAX via the Priority 2 fallback. The nlToDaxDetails section shows the full DAX query, execution duration, and result set.

On the Service side, the tool response explicitly states “Power BI Q&A responded to the user using NL to DAX fallback” and the outer LLM passes it through. The sidebar also surfaces a “View DAX query” button in the UI. Desktop does not.

Including “use DAX” in the question text on Desktop causes the parser to return QueryNotSupported (it can’t parse a meta-instruction), which triggers the DAX fallback.

How to Export and Read the Diagnostic Data

In any Copilot pane (Desktop or Service), click the three-dot menu (…) at the top right and select “Export diagnostic data.” It’s a json file.

The export contains:

chatHistory: the full conversation (user messages, tool calls, tool responses)dataQuestion: the internal pipeline for eachanswerDataQuestioncall, includinginterpretRequest,interpretResponse(with warnings and restatements), andnlToDaxDetails(with every DAX generation attempt, errors, retries, and timing)copilotModelSettings: the schema sent to Copilot, including entity names, column visibility, Terms/Synonyms, and Custom InstructionsreportContentCopilot: visual timing data for report summariesCopilotAgentId,serviceVersion,consumptionMethod: agent identification

Microsoft’s summarization docs mention the diagnostics as a way to check visual query timings. The export contains considerably more.

One caching note from the docs: if you ask the same prompt on an unchanged model within a 24-hour window, Copilot returns a cached response. Clearing the chat doesn’t reset this. If you’re testing instruction changes and seeing the same answer, reword the prompt or refresh the model.

Observations

The loading messages in the UI indicate which tier is active. “Checking the underlying data” means the Q&A parser is working. “Generating a DAX query” means the NL-to-DAX fallback has been triggered. “Scanning report content” means reportSummary is reading visuals.

Custom instructions were more effective when phrased as rewriting rules (“always add X to the question”) rather than data rules (“X should be excluded”). Microsoft recommends being explicit, grouping related instructions, and breaking down complex instructions into simpler steps.

Field descriptions reach the DAX generator but not the Q&A parser. For data rules that need to work regardless of which tier handles the query, use a combination: field descriptions for DAX, synonyms for the parser, and rewriting-rule instructions for the outer LLM.

Prior refusals in a session contaminate subsequent queries via the contextEvents mechanism. Clearing the chat resets this.

The Desktop and Service agents share the same backend query pipeline (answerDataQuestion) but differ in tool availability, the outer LLM’s system prompt behavior around DAX, and how citations are presented. Microsoft’s recommended implementation order for data preparation is: AI data schemas, then verified answers, then AI instructions, then descriptions.

Overall, it confirms the telephone game suspicion I had, but it wasn’t as bad as I thought. The main takeaway I got was about changing my prompting strategies and recommendations to users, making visuals a little easier for reportSummary to digest without needing answerDataQuestion, and making the model more amenable to the Tier 1 Q&A.

What do you see?

Leave a Reply